Click to learn more about author Paul Stanton.

This is Part 2 of the “Docker Containers and Database Cloning for DBAs, Data Governance, and IT Decision Makers” Series.

Part 1 of the series is at: Docker Containers and Database Cloning for DBAs, Data Governance, and IT Decision Makers: An Introduction, it provided an introduction to SQL containers, and common strategies for use.

In this installment we’ll look at practical methods used to apply SQL Server containers for support of Dev/test, reporting and BI. In part 3 and 4 we’ll look at customer case studies and how containers apply to Data Governance.

Data on Demand: Self-Service Terabyte Class Data in 45 Seconds

The most popular use of containers is for self-service support of developers and QA. DBAs build a secure SQL Server images that includes one or multiple databases, and users can self-service environments as needed.

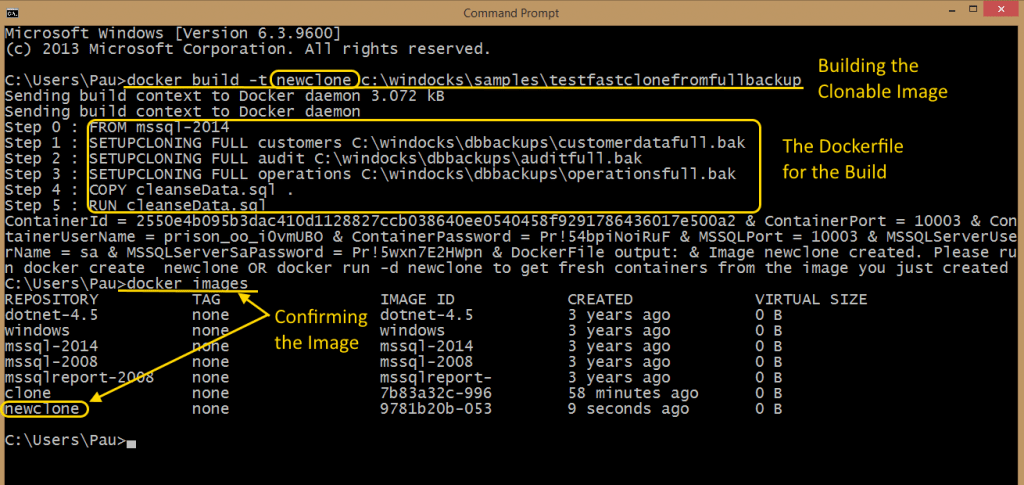

The process begins by building an image using a Dockerfile, which is a plain text configuration file. In this example the image is composed of three databases, which are restored from a full backup. SQL Server scripts are copied and run during the image build, to implement user/group permissions, data masking, and encryption.

The image is built using a Docker command that references the directory path of the Dockerfile (see image below). Files included in the directory are copied to the container engine, so don’t include the target databases in this folder! Backups in this example are located in a separate directory, and the clone images (Virtual Hard Drives) are built in this directory. The Docker build is illustrated below, followed by the Dockerfile context. The client return string includes details of the build, and the new image “newclone” is confirmed by running a >docker images command.

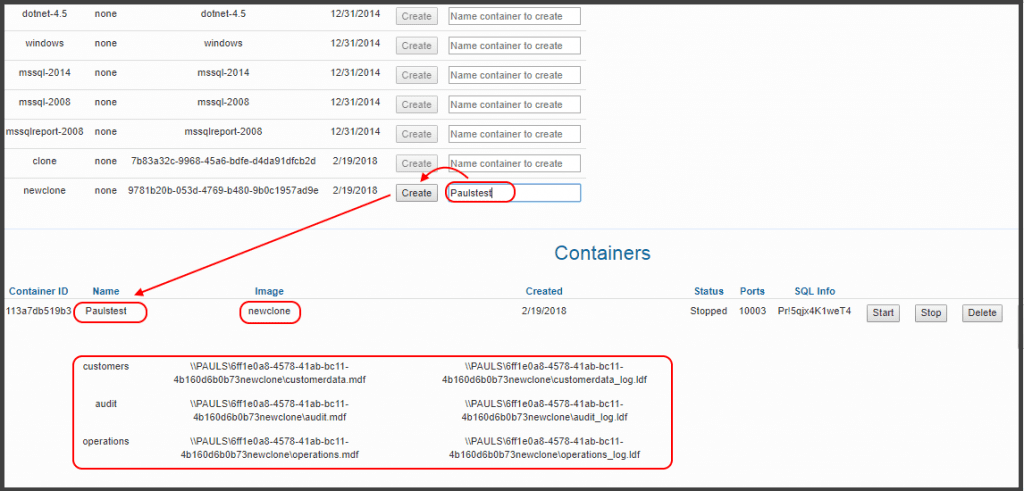

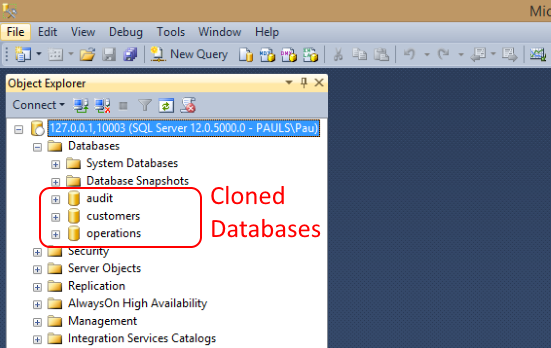

With the image available, users can provision SQL Server containers with the cloned data through the web UI. The container is easily started, and accessed at the host IP address and port. For SQL Server Management Server running locally the iP is the loopback address (127.0.0.1,10003).

Deliver Cloned Data to Docker SQL Server Containers and Instances

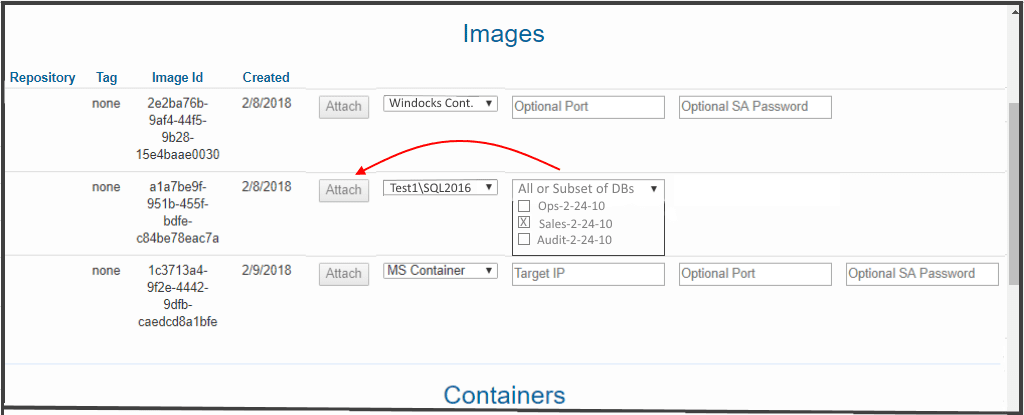

SQL Server containers offer great appeal for their speed and resource usage, with users realizing an average reduction in VMs used of 5:1. Most organizations adopt containers gradually, and need to support both containers and conventional SQL Server instances. This is straightforward with a web UI that provides users the ability to deliver cloned databases on Dockers SQL Server containers or conventional instances. In the example the user targets delivery to a conventional SQL Server instance (\\Test1\SQL2016), and selects a subset of the databases included in the image.

Configuring SQL Server Containers with Scripts

One of the challenges in working with containers is the need to configure the container for use. A freshly minted container isn’t useful if it doesn’t support databases that include TDE encryption, or database mail, and a myriad of other configuration options. These configuration settings are addressed by running SQL Server scripts.

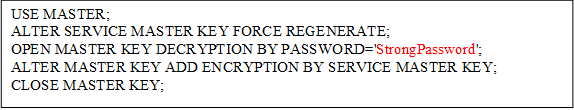

The order that scripts are run is important for some configurations. For example, a data image that includes SQL TDE encryption requires the container be enabled with the encryption key and certificate prior to mounting the TDE enabled databases. Windocks containers are created by cloning a SQL Server instance on the host, and inherit encryption certificates and other Master database configuration settings. But, the encryption certificate needs to be “regenerated” in order to be enabled for a freshly minted container.

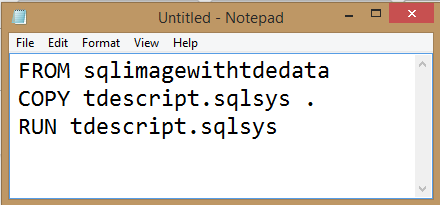

Windocks addresses the need for controlling the order of scripts with file extensions. Scripts with the .sqlsys extension are run before databases are mounted, and scripts with .sql extensions are run afterwards. In the case of TDE support, a script refreshes the encryption certificate on the container, and makes it ready for work with TDE based databases.

The script is incorporated into the container build in a Dockerfile as shown. Even though the image includes the mounting encrypted databases, the scripts with .sqlsys file extensions are run on the container prior to database operations. The result of the dockerfile below is a container with a regenerated encryption certificate, and with mounted and attached databases from the image.

Conclusions and Next Steps

Containers combined with database cloning is a powerful combination for modernizing relational database workflows. Terabyte class data environments can be easily built by DBAs, and made available for development, test, QA, and reporting and BI. Users can access the images using simple web portals, and have access to data in less than a minute. These technologies run wherever Windows servers are supported, including all public clouds or on premise infrastructure.

Even where containers aren’t needed, database cloning should be useful for a mix of use-cases, and supports use with Microsoft’s official Docker containers as well as conventional instances. To learn more about SQL Server containers and database cloning visit the following links:

Microsoft SQL Server containers: https://docs.microsoft.com/en-us/sql/linux/sql-server-linux-setup

Windocks SQL Server containers: https://windocks.com/lps/itexec-docker-windows