Companies pour a lot of effort into Business Intelligence and Analytics (BI/A). In late 2013, Gartner predicted that the importance of BI/A projects for CIOs would continue to grow well into 2017 and beyond. Despite all the effort and attention on analytics, though, companies are still struggling to succeed with Big Data. A late 2014 survey found that only 27% of Big Data projects succeed, while only 13% reach full production use. A 2015 DATAVERSITY® research report on Business Intelligence and Data Science examined the problems of leveraging such assets in detail: only 17% of survey respondents said they had a well-developed Predictive/Prescriptive Analytics program in place, while 80% said they planned on implementing such a program within five years. Such trends are not sustainable for any enterprise, and that is where Data Science comes in. How to properly execute such a program remains a conundrum for many enterprises.

Companies that want to make their Data Science projects succeed should pay attention to the advice John Akred of Silicon Valley Data Science (SVDS) gave in his “Coaching the Data Science Team: Running Data Projects” presentation at the CDOvision 2015 Conference. The advice comes from real-world experience building and delivering Data Science projects. His company had a strong motivation to find an effective approach: “We do this under contract, so we can get sued when our projects don’t work.”

Organizing a Team and Organizing the Project

According to Akred, a team approach is necessary. SVDS teams include data scientists, data engineers, architects, designers, and data visualization specialists. They begin their project work by identifying the business goals of the project, creating a hypothesis about how they can use data to satisfy those goals, and working iteratively to create tangible results.

Akred emphasizes the need to focus on what you do with data, as opposed to what you do to data. They organize the project around their hypothesis of what they want to do with the data—attract new customers, for example—not the process of cleaning, validating, controlling, and protecting data.

Those data manipulation tasks are important, Akred agreed, but he said:

“Oftentimes we’re embarking on an experimental journey and to worry about all of the fine art of Data Management before you even know if you can do something useful thwarts the process of learning.”

Methodology Options

One of the most popular methods for Data Science projects, according to a KDNuggets poll, is CRISP-DM. Akred pointed out that CRISP-DM, which stands for Cross-Industry Standard Process for Data Mining, lacks a test phase. “Your ability to do great harm with data is as profound as your ability to do great good with it in today’s world,” he said.

Data Science projects are ultimately software projects:

“How might we take some of the lessons of software engineering around discipline, around building systems that are reliable, that are predictable, and bring them to how we do Data Science and data-driven projects?” Akred asked.

The software engineering methods Akred looked at include Waterfall, Agile, SAFe, and Scrumfall. Akred saw a role even for traditional methods in some data projects:

“The good old-fashioned waterfall process works great if you’re building out a SAP installation. SAP is a very complicated piece of software, but it’s a well-understood piece of software with predictable processes you go through as you build it.”

But Waterfall isn’t applicable to many Data Science projects that are “an inherently speculative endeavor,” as Akred described them. For those projects, the detailed planning of Waterfall only provides the illusion that the risk is controlled. Instead, Agile project methods—with some modifications for Data Science—are more appropriate.

Agile for Data Science Projects

The benefit of Agile is that there’s a plan, but the plan allows taking detours. “If I’m flexible I might learn something on that detour that ultimately helps me be more successful,” Akred said.

They start by defining success in terms of the business value the project will deliver. The project starts with a charter, which defines the reasons the project is being undertaken—for Data Science projects, those reasons document investigation themes. The work on an investigation theme can include several kinds of exploratory analysis.

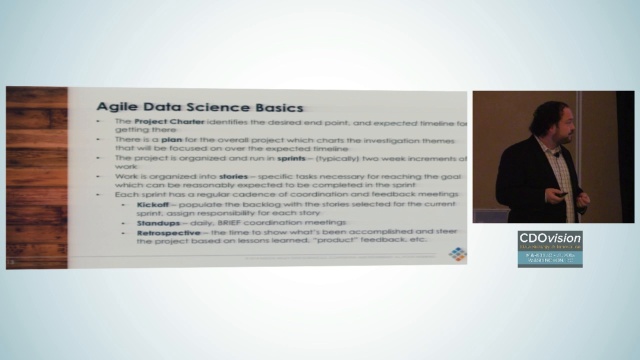

“The project charter identifies the desired end product and expected timeline of getting there,” Akred explained. “There is a plan for the overall project which charts the investigation themes that will be focused on over the expected timeline.”

The timeline is broken down into sprints, which plan for deliverables in two-week increments. The work for a sprint is documented as stories, which describe the specific tasks to be completed in the sprint. Every sprint begins with a kickoff meeting, where the project backlog is populated with the stories assigned to the current sprint. There are daily standup meetings throughout the sprint, allowing for communication and coordination. A sprint ends with a retrospective, where the accomplishments are shown and lessons learned are applied to guide the project’s future work.
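The structure described above (a timeline split into two-week sprints, each holding stories tied to an investigation theme) can be sketched as a small data model. This is only an illustration, not a tool SVDS described: the class names `Story` and `Sprint`, the helper `plan_sprints`, the start date, and the sample story are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Story:
    """One specific task to be completed in a sprint (hypothetical model)."""
    title: str
    theme: str  # the investigation theme from the charter that this story serves
    done: bool = False


@dataclass
class Sprint:
    """A two-week increment and the stories assigned to it."""
    start: date
    stories: list[Story] = field(default_factory=list)

    @property
    def end(self) -> date:
        # Two weeks is 14 calendar days, counting the start day itself.
        return self.start + timedelta(days=13)


def plan_sprints(start: date, count: int) -> list[Sprint]:
    """Break a timeline into `count` consecutive two-week sprints."""
    return [Sprint(start + timedelta(weeks=2 * i)) for i in range(count)]


# Assumed example data: three sprints and one story for the first sprint.
sprints = plan_sprints(date(2015, 1, 5), 3)
sprints[0].stories.append(
    Story("Profile clickstream data", theme="attract new customers")
)
for sprint in sprints:
    print(sprint.start, sprint.end, len(sprint.stories))
```

Keeping the theme on each story makes it possible to see, sprint by sprint, which parts of the charter are actually receiving work.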

Solve the Problem Before Building Product Features

Because the work on a Data Science project is exploratory, the early sprints are focused on demonstrating that it’s even possible to solve the problem. “Understand what is controversial or poorly understood about what you’re trying to do, solve those problems, and answer the question first, then build the thing later,” Akred said. In practice, that means they solve the data analysis problem “long before we begin to build the application, mock up the user interface, and things like that.” If the proposed solution turns out to be infeasible, minimal effort has been wasted. Organizing work around the hypothesis this way provides a good means of tracking how the project work is progressing.

Along with providing a good means of tracking work, this method provides a means to communicate with stakeholders. “You have the opportunity to prioritize which of these hypotheses you’re testing based on how other things are going on the project,” Akred said. Changing priorities means moving items between the current sprint plan and the backlog. “Now we’re having a real conversation about tradeoffs rather than simply being asked to do more in the same amount of time.”
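The tradeoff conversation Akred describes can be made concrete with a small sketch. It assumes the sprint has a fixed capacity (a simplification not stated in the talk), so promoting one hypothesis from the backlog pushes the lowest-priority sprint item back out. The function name `reprioritize` and the sample hypotheses are hypothetical.

```python
def reprioritize(
    sprint: list[str],
    backlog: list[str],
    promote: str,
    capacity: int,
) -> tuple[list[str], list[str]]:
    """Move `promote` from the backlog to the top of the sprint plan.

    Both lists are ordered from highest to lowest priority. Sprint items
    that no longer fit within `capacity` return to the top of the backlog.
    """
    if promote not in backlog:
        raise ValueError(f"{promote!r} is not in the backlog")
    remaining_backlog = [item for item in backlog if item != promote]
    candidate_sprint = [promote] + sprint
    # Assumption: capacity is a simple count of items, not an effort estimate.
    overflow = candidate_sprint[capacity:]
    return candidate_sprint[:capacity], overflow + remaining_backlog


# Hypothetical hypotheses, for illustration only.
sprint_plan = ["churn model", "pricing test"]
backlog = ["referral signal", "seasonality"]

new_sprint, new_backlog = reprioritize(
    sprint_plan, backlog, promote="referral signal", capacity=2
)
print(new_sprint)
print(new_backlog)
```

Because capacity stays fixed, every promotion has a visible cost: something else leaves the sprint, which is the tradeoff stakeholders are asked to weigh.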

Anatomy of a Sprint

Sprints last about two weeks. They start by planning out what they’re going to work on over the next two weeks. The plan is tentative, not committed.

“We don’t know exactly how long things will take. It’s not micro Waterfall, but we put in enough so the team will stay busy and enough we can take advantage of opportunity if we get things done fast,” Akred said.

Each morning the team holds a brief standup meeting to discuss what was completed yesterday, what’s planned for today, and what’s blocking progress. This meeting doesn’t require advance preparation, and they don’t try to solve problems during the meeting.

At the end of the sprint, the team holds a retrospective with the stakeholders to demonstrate progress and assess the value of the work. “You give demos so your stakeholders can go, ‘That’s exactly what I asked you for. Totally don’t want it.’” That happens all the time, Akred said. “Sometimes in the Waterfall world this happens after you’ve been working for two years, which is a serious bummer, to use the technical term,” he continued.

The Experimental Enterprise

By working in this way, Data Science projects can support what Akred referred to as “the experimental enterprise.” This is “an enterprise that’s oriented and organized around fast experimentation to understand, learn, and improve the operations of the company.”

Structuring Data Science projects as a Data Science layer atop Agile, APIs, Cloud, DevOps, and open source lets teams conduct investigations while also preparing for production. The solid infrastructure combined with Agile lets the Data Science team conduct, observe, and react to their experiments, achieving the business goal and delivering value to their clients.
