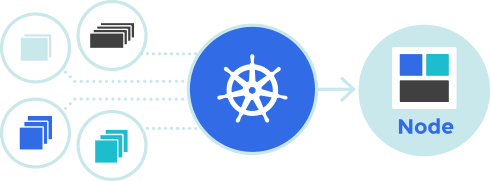

Kubernetes, or K8s for short, is an open-source system for automating, deploying, scaling, and managing containerized applications. Initially internal to Google Cloud Platform, Kubernetes is becoming widely adopted across the industry. It addresses two issues.

Resources needed to run an API (Application Programming Interface) development may be spread across different hardware, clouds, and teams in different physical locations. Also, to engineer software well, by using Agile Software Development, building effective applications requires breaking them down into smaller pieces and releasing these modules more frequently. Kubernetes combines the benefits of both worlds, cohesively tying together multiple physical and virtual containers and components working off a load balancer, allowing for quicker iterative software development.

[dv-promo buttontext=’SIGN UP FOR OUR WEEKLY DATA MANAGEMENT NEWSLETTER’ buttonurl=’https://www.dataversity.net/subscribe/?utm_source=dataversity&utm_medium=inline_ad&utm_campaign=DM_weekly_temp2&utm_content=copy2′]

Each Kubernetes container cluster comprises a pod. Pods share namespaces (IP and Volume) and form a scheduling unit. A more detailed visual can be seen here. Although Kubernetes has matured as a technology, it requires sophisticated expertise and effort to install and maintain. Given this, several vendors have container-as-a-service (CaaS) offerings to deploy and maintain Kubernetes. As long as modular programs remain consistent with the API, Kubernetes provides a reliable means to deliver and update functionality.

Other Definitions of Kubernetes Include:

- “A container clustering platform that Google has been using internally for many years before turning it into an open-source project in 2014.” (Pete Johnson)

- “A powerful open-source system, initially developed by Google, for managing containerized applications in a clustered environment.” (DigitalOcean Inc.)

- “A container management platform” that “automates the creation and placement of containers in order to support higher-level services.” (Gartner)

- “Container orchestration software – essentially, plumbing for running enterprise-class software in the cloud.” (Forbes)

- “An open-source ecosystem for automating the deployment, scaling, and management of containerized applications.” (TechRepublic)

Kubernetes Use Cases Include:

- Updating a legacy application, written in a Linux shell, without having to maintain separate development and production environments

- Exploiting 170 different cloud-native services in IBM’s catalog.

- Deliver new services faster and provide more value to National Association of Insurance Commissioners (NAIC) members and staff

Businesses Use Kubernetes to:

- Integrate hybrid cloud/cross-cloud development efficiently

- Manage developing software applications that rely on microservices’ methodology

- Make it easier to develop extended functionality and make changes to existing programs

- Automatically roll out and roll back functionality changes

- Replace failing functionality quickly, with minimal disruption to current processes

- Utilize computing resources more effectively