When you design an Apache Cassandra table schema and anticipate how the table will be used, it's a best practice to choose a compaction strategy at the same time. You can change a table's compaction strategy after it is created, but doing so is expensive: Cassandra will typically need to rewrite all of that table's data, which costs the cluster significant disk I/O and CPU. Choosing the right compaction approach, and choosing it as early as possible, is a key factor in running Cassandra efficiently.

The Write Path

Understanding how Cassandra writes data to disk makes the importance of efficient compactions clear. Let’s take a look at the write path process:

- Cassandra appends each write to the commit log for durability and stores it in memory in the Memtable.

- When the Memtable fills up (reaches a configurable memory threshold), Cassandra flushes its contents to disk. Data on disk is stored in Sorted String Tables (SSTables): immutable files whose rows are sorted by key.

- The Memtable merges writes to the same row and keeps its data sorted by Primary Key, so each flush produces an already-sorted SSTable. The Primary Key consists of a Partition Key, which determines the nodes where the data is stored, and any defined Clustering Keys, which order rows within a partition.

- The SSTable is written to disk sequentially in a single operation. Because SSTables are immutable and never modified once written, updates are written to new SSTables, and deletions are written as markers called tombstones. When data is updated often, Cassandra may have to read from several SSTables to reconstruct a single row.

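- Compaction cleans this up by merging SSTables into new ones. It keeps only the newest version of each value and eventually purges deleted and expired data, which frees disk space and means reads need to touch fewer SSTables.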
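To make the compaction step concrete, here is a minimal Python sketch of what merging SSTables achieves. It assumes a deliberately simplified model: each SSTable is a hypothetical dict mapping a row key to a (write timestamp, value) pair, with None standing in for a tombstone, and rows are reconciled by last-write-wins. The 864,000-second default mirrors Cassandra's gc_grace_seconds default of 10 days; real compaction reconciles individual cells rather than whole rows and applies extra safety checks before purging tombstones.

```python
from typing import Optional

# Simplified, hypothetical model: an SSTable maps a row key to
# (write_timestamp, value); a value of None stands for a tombstone (a delete).
Cell = tuple[int, Optional[str]]
SSTable = dict[str, Cell]


def compact(sstables: list[SSTable], now: int, gc_grace_seconds: int = 864_000) -> SSTable:
    """Merge SSTables into one, keeping only the newest cell per key.

    Tombstones older than gc_grace_seconds are purged. (Real Cassandra also
    checks that no SSTable outside the compaction still holds older data.)
    """
    merged: SSTable = {}
    for table in sstables:
        for key, (ts, value) in table.items():
            # Last write wins: keep whichever cell has the higher timestamp.
            if key not in merged or ts > merged[key][0]:
                merged[key] = (ts, value)
    # Emit the result sorted by key, like every SSTable, and drop tombstones
    # whose grace period has elapsed.
    return {
        key: cell
        for key, cell in sorted(merged.items())
        if not (cell[1] is None and now - cell[0] > gc_grace_seconds)
    }
```

With an older SSTable holding alice=v1 and bob=v1, and a newer one holding alice=v2 and a tombstone for bob, the merged SSTable keeps alice=v2 and, until the grace period has passed, the tombstone for bob; after that, only alice remains.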

Which Cassandra Compaction Strategy Fits Your Use Case?

Cassandra offers four compaction strategies suited to various use cases:

Size Tiered Compaction Strategy (STCS)

This is the default strategy. STCS groups SSTables of similar size into buckets and compacts a bucket once it holds enough SSTables (four by default, set by the min_threshold sub-property). Other sub-properties tune how many SSTables are compacted at once and how tombstones are handled. This strategy is a good fit for general-purpose or insert-heavy workloads.
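The bucketing idea behind STCS can be sketched in a few lines of Python. This is a simplified model, not Cassandra's implementation: the parameters are named after the real STCS sub-properties bucket_low, bucket_high, min_sstable_size, min_threshold, and max_threshold (documented defaults 0.5, 1.5, 50 MB, 4, and 32), but the sketch works on plain sizes in MB and skips details such as how Cassandra chooses which eligible bucket to compact first.

```python
def stcs_buckets(sizes_mb: list[float], bucket_low: float = 0.5,
                 bucket_high: float = 1.5, min_sstable_size_mb: float = 50) -> list[list[float]]:
    """Group SSTable sizes into buckets of similar size, STCS-style."""
    buckets: list[list[float]] = []
    for size in sorted(sizes_mb):
        for bucket in buckets:
            avg = sum(bucket) / len(bucket)
            # An SSTable joins a bucket if it is close to the bucket's average
            # size, or if both it and the bucket are below the small-size floor.
            similar = bucket_low * avg < size < bucket_high * avg
            both_small = size < min_sstable_size_mb and avg < min_sstable_size_mb
            if similar or both_small:
                bucket.append(size)
                break
        else:  # no existing bucket fits, so start a new one
            buckets.append([size])
    return buckets


def compaction_candidates(sizes_mb: list[float], min_threshold: int = 4,
                          max_threshold: int = 32, **bucket_opts: float) -> list[list[float]]:
    """Buckets holding at least min_threshold SSTables, capped at max_threshold each."""
    return [bucket[:max_threshold]
            for bucket in stcs_buckets(sizes_mb, **bucket_opts)
            if len(bucket) >= min_threshold]
```

With SSTables of 10, 12, 20, 30, 100, 110, 120, 130, and 1,000 MB, for example, the four small SSTables and the four SSTables near 100 MB each form a bucket eligible for compaction, while the 1,000 MB SSTable waits until similar-sized peers appear.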

Leveled Compaction Strategy (LCS)

This compaction strategy organizes SSTables into levels. Each level has a size limit 10 times larger than the previous one, and the SSTables themselves are relatively small: 160 MB by default (the sstable_size_in_mb sub-property). For example, if Level 1 holds at most 10 SSTables, Level 2 holds at most 100. Newly flushed SSTables land in Level 0 and are compacted with the Level 1 SSTables whose key ranges overlap theirs. When a level exceeds its size limit, Cassandra compacts SSTables from that level with the overlapping SSTables in the next level, and so on. As a result, SSTables never overlap within a level (Level 0 excepted).
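The level sizing arithmetic is easy to check with a few lines of Python. This sketch assumes the defaults described above (160 MB SSTables and a factor of 10 between levels) and computes each level's maximum SSTable count and total size; the function name is hypothetical.

```python
def lcs_level_limits(levels: int, sstable_size_mb: int = 160,
                     fanout: int = 10) -> list[tuple[int, int, int]]:
    """Return (level, max_sstables, max_size_mb) for Levels 1..levels."""
    limits = []
    for level in range(1, levels + 1):
        max_sstables = fanout ** level  # each level is `fanout` times the previous
        limits.append((level, max_sstables, max_sstables * sstable_size_mb))
    return limits


if __name__ == "__main__":
    for level, count, size_mb in lcs_level_limits(3):
        print(f"Level {level}: up to {count} SSTables ({size_mb} MB)")
```

For three levels this gives 10, 100, and 1,000 SSTables, or roughly 1.6 GB, 16 GB, and 160 GB of data.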

Use this compaction strategy for read-heavy workloads. Because SSTables within a level don't overlap, a read has to check at most one SSTable per level, and LCS is designed so that about 90% of reads are served from a single SSTable (assuming roughly uniform row sizes). This strategy is also a strong choice for workloads with more updates than inserts.
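Why non-overlapping levels help reads can be shown with a small lookup sketch. Assuming each level above Level 0 is modeled as a sorted list of hypothetical (first_key, last_key) ranges, a binary search finds the one SSTable per level that could contain a key, so a read touches at most one SSTable per level. With a factor of 10 between levels, the highest level holds roughly 90% of the data, which is consistent with the 90% figure above. The sketch ignores Level 0, whose SSTables may overlap.

```python
from bisect import bisect_right

# Simplified model: a level is a sorted list of non-overlapping
# (first_key, last_key) ranges, one per SSTable, as LCS maintains for Level 1+.
Level = list[tuple[str, str]]


def sstables_to_read(levels: list[Level], key: str) -> list[tuple[int, int]]:
    """Return (level_number, sstable_index) for each SSTable that may hold `key`."""
    hits = []
    for level_no, ranges in enumerate(levels, start=1):
        first_keys = [first for first, _ in ranges]
        # Index of the last SSTable whose range starts at or before `key`.
        i = bisect_right(first_keys, key) - 1
        # Ranges don't overlap, so only that one SSTable can contain the key.
        if i >= 0 and key <= ranges[i][1]:
            hits.append((level_no, i))
    return hits
```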

Time Window Compaction Strategy (TWCS)

This strategy is designed for time-series data and replaces the Date Tiered Compaction Strategy (DTCS), described below, as the recommended choice for such workloads. Within the current time window, TWCS compacts SSTables using STCS. Once the window closes, all of its SSTables are compacted into one, leaving a single SSTable per time window. The window an SSTable belongs to is determined by the maximum timestamp of the data it contains. When data is written with a TTL, TWCS purges it efficiently by dropping whole SSTables once all of their data has expired. And because SSTables from closed windows are not compacted again, TWCS keeps write amplification low.

This approach is best used with time-series data that’s immutable after a fixed amount of time.
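A minimal Python sketch of how TWCS assigns SSTables to windows follows. It floors each SSTable's maximum timestamp to a window boundary, using a unit and size named after the real compaction_window_unit and compaction_window_size sub-properties (TWCS defaults to one-day windows). The SSTable names, and the assumption that every cell in an SSTable shares one TTL, are illustrative only.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone

# Seconds per compaction_window_unit value.
UNIT_SECONDS = {"MINUTES": 60, "HOURS": 3_600, "DAYS": 86_400}


def window_start(max_timestamp: datetime, unit: str = "DAYS", size: int = 1) -> datetime:
    """Floor an SSTable's maximum timestamp to the start of its time window (UTC)."""
    width = UNIT_SECONDS[unit] * size
    seconds = int(max_timestamp.timestamp())
    return datetime.fromtimestamp(seconds - seconds % width, tz=timezone.utc)


def group_by_window(max_timestamps: dict[str, datetime], unit: str = "DAYS",
                    size: int = 1) -> dict[datetime, list[str]]:
    """Map each window start to the (hypothetical) SSTable names that fall in it."""
    windows: defaultdict[datetime, list[str]] = defaultdict(list)
    for name, max_ts in sorted(max_timestamps.items()):
        windows[window_start(max_ts, unit, size)].append(name)
    return dict(windows)


def fully_expired(max_timestamp: datetime, ttl: timedelta, now: datetime) -> bool:
    """True once the newest cell has outlived the TTL, so the whole SSTable can go.

    Assumes every cell in the SSTable was written with the same TTL.
    """
    return max_timestamp + ttl <= now
```

Because only the maximum timestamp matters, an SSTable whose newest write lands just after midnight belongs to the new day's window, and it can be dropped in one step once that newest write has expired.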

Date Tiered Compaction Strategy (DTCS)

This strategy is designed specifically for time-series data. It stores data written during the same time period in the same SSTable, and compacts groups of SSTables written in the same time window together. Data is typically written with a Time to Live (TTL); once all data in an SSTable has outlived its TTL, the SSTable is simply dropped and adds no compaction overhead.

While this strategy is efficient, it works well only when the workload fits its narrow requirements. It is not designed to handle updates to older data or out-of-order inserts. For such workloads, STCS combined with a time-based bucketing key that breaks data into smaller partitions may be the better approach.
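To illustrate what a time-based bucketing key means, here is a hypothetical Python helper that derives a partition key from a sensor ID and a UTC day. In a CQL schema, the equivalent would be a composite partition key such as PRIMARY KEY ((sensor_id, day), event_time); the sensor_id, day, and event_time columns are assumptions for this example.

```python
from datetime import datetime, timezone


def bucketed_partition_key(sensor_id: str, event_time: datetime) -> tuple[str, str]:
    """Hypothetical partition key: one partition per sensor per UTC day.

    Late or out-of-order events still land in the partition for the day they
    describe, and no single partition grows without bound.
    """
    day_bucket = event_time.astimezone(timezone.utc).strftime("%Y-%m-%d")
    return (sensor_id, day_bucket)
```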

Note: DTCS is deprecated in Cassandra 3.8 and later; in those versions, use the Time Window Compaction Strategy (TWCS) described above instead.

Cassandra Compaction Strategy Configuration

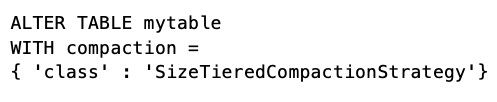

Now to put these strategies into practice. You can configure compaction per table in CQL, for example from cqlsh, by setting the compaction property in a CREATE TABLE or ALTER TABLE statement. This lets you give each table the strategy that best fits how it is used.
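As a sketch of what such a statement looks like, the Python helper below renders an ALTER TABLE statement that sets a table's compaction class and sub-properties. The helper and the metrics.readings table are hypothetical; the class names and sub-properties (such as compaction_window_unit) are real Cassandra options. It performs no escaping, so use it only with trusted values, and run the output in cqlsh or through a driver.

```python
def compaction_cql(keyspace: str, table: str, strategy: str, **options: object) -> str:
    """Build a CQL ALTER TABLE statement that sets a table's compaction strategy.

    All option values are rendered as quoted strings, since Cassandra stores
    compaction sub-properties as text. No escaping is done: trusted input only.
    """
    props = {"class": strategy, **{name: str(value) for name, value in options.items()}}
    body = ", ".join(f"'{name}': '{value}'" for name, value in props.items())
    return f"ALTER TABLE {keyspace}.{table} WITH compaction = {{{body}}};"


if __name__ == "__main__":
    # Hypothetical keyspace and table names.
    print(compaction_cql("metrics", "readings", "TimeWindowCompactionStrategy",
                         compaction_window_unit="DAYS", compaction_window_size=1))
```

The example call prints ALTER TABLE metrics.readings WITH compaction = {'class': 'TimeWindowCompactionStrategy', 'compaction_window_unit': 'DAYS', 'compaction_window_size': '1'};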

STCS is used by default if no compaction strategy is specified. To learn more about the options available for each strategy, see the compaction sub-properties section of the Cassandra documentation. It's also wise to follow a squeaky wheel policy: leave the default options in place unless a table shows performance issues, and only then make targeted changes.