Click to learn more about author Wayne Yaddow.

DataOps, which focuses on automated tools throughout the ETL development cycle, responds to a huge challenge for data integration and ETL projects in general. ETL projects are increasingly based on agile processes and automated testing.

ETL (i.e., extract, transform, load) projects are often devoid of automated testing. The lack of automated testing is usually due to 1) critical ETL testing functions that are not available on the market or open source, 2) the complexity of some ETL testing tools, or 3) the high cost of developing tools in-house.

Gartner Group has repeatedly confirmed that 70% or more of data integration, migration, and business intelligence initiatives fail on their first attempt. As businesses create more (and demand more) data than ever before, the failure rate is astounding.

This two-part blog series highlights:

- What makes DataOps processes valuable for ETL projects

- What are the driving forces for ETL testing automation

- Why it’s vital to approach test tools as a solution, not a one-off initiative

- Steps to get started on data testing automation

DataOps in a Nutshell

“DataOps is a process-driven, automated approach to data delivery and analytics. DataOps uses the agile approach between data owners and technical teams to improve quality while reducing cycle times. It borrows methods from DevOps to bring similar improvements. DataOps is not tied to any particular tool or technology.” (CloverDX.com)

DataOps is designed to create an automated workflow so that development and operations teams aren’t at odds during rollouts. Building a repeatable process for each deployment brings speed, consistency, and reliability to a task that had previously been tumultuous at best. There are now hundreds of ETL tools that claim to make development, deployment, and maintenance easy.

Another characteristic of DataOps is continuous integration and testing for Data Quality in all data pipeline lifecycle stages. Necessary steps include: (1) testing all data arriving from sources using unit tests and schema/SQL/streaming verifications, (2) validating data at each stage in the data flow, (3) capturing and publishing metrics, and (4) reusing test tools across projects. Among the advanced test tools for continuous data integration are Soda, SQL, and DbFit.

DataOps is designed as a way of collaborating and working across functions. Those who practice the DataOps methodology use various automation tools often referred to as “toolchains” – a set of tools that aid in developing, testing, delivering, monitoring, and managing data throughout the systems development lifecycle (SDLC) – all coordinated by the organization that uses DataOps practices. Toolchains fall into one or more of the following categories, which reflect the essential characteristics of an SDLC process:

- Development – preparation of data, continuous integration

- Configuration – Dev, QA, production infrastructure configurations, and management

- Deployment – continuous integration and version control

- Testing – continuous testing, monitoring, metrics, and automation tools

- Releasing – DB version control, change management, release approvals, release automation

- Production monitoring – Data Quality and performance monitoring

ETL Projects with Little Test Automation Are Not DataOps-Compliant

Manual ETL tests are performed step by step and are usually slow. The results tend to be expensive, as they require someone to create an environment and run the tests one at a time. Manual testing can also lead to human mistakes.

In the DataOps domains, there is a propensity to concentrate closely on tools that help automate testing. Beyond that, there is a looming predicament around how project quality assurance and specific tests fit into the lifecycles of DataOps products. How do teams get enough insight into the potential defects that small incremental changes introduce without slowing down the workflow? The tester’s response should be a practice of tailoring a testing strategy that represents common goals across project teams.

DataOps processes should automate testing to the extent possible while considering the IT technologies that were adopted for the project. Automated tests can be reliable, but their efficiency depends on the quality and extent of the tools and tests. In a DataOps-practicing enterprise, members of the project team spend 20% or more of their time planning and writing tests. Whenever a problem is encountered and corrected, new tests are added, after which regression tests should be run.

A primary advantage of automated ETL testing is that it’s easier to execute repeatedly and regularly. Manual testing is often too expensive and slow to run periodically. To ensure high quality, you must consistently and regularly test your data and ETL logic. (Check out “The DataOps Cookbook,” 2nd Edition.)

Automating the testing process itself can be challenging but at the same time critical for increasing development speed and reliability.

ETL test automation includes the following types of tests for data pipeline inputs, data transformations, business logic, and outputs:

- Unit tests – stand-alone tests of each component

- Functional tests – verifications against user requirements and technical specifications

- Regression tests – reruns of tests after code and data changes

- Performance tests – checking responsiveness under expected workloads

- Smoke tests – quick tests after data builds

- End-to-end tests – verify entire data pipeline process

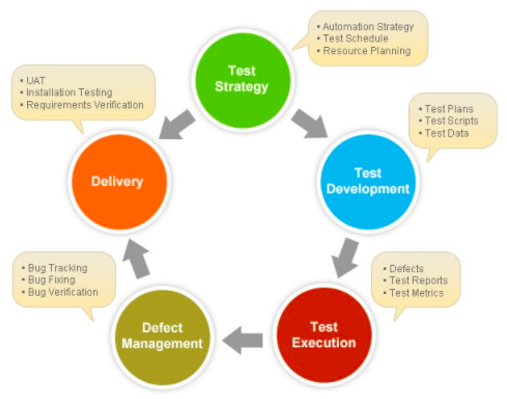

To support your development technologies, a well-planned set of test tools is a foundational component of DataOps. See Figure 1.

Figure 1: Components of a DataOps test strategy

A DataOps project-wide testing strategy is essential. The project testing strategy is a plan outlining how you will accomplish quality goals throughout the SDLC.

In DataOps, the goal is to deliver value to customers quickly; supporting IT activities with testing should, by default, be automated. But what is often missing is a “strategy.” How do you know that your automated testing will help accomplish your goal without a test strategy and associated plan?

The Case for Fast-Tracking to ETL Test Automation

“Test automation tools are essential elements of a DataOps toolchain. Those tools are enablers for achieving the continuous quality approach required for successful DataOps.” (Gartner Group’s Magic Quadrant for Software Test Automation)

Organizations are publishing new applications and updates faster than ever before – sometimes several times a day. Organizations often use manual ETL testing processes and inadequate tools to manage critical portions of their highly visible and customer-facing applications. And this translates into risks – risks to client loyalty, brand, and their confidential data.

Implementing a DataOps testing approach for ETL projects means automating testing for source and target datasets and ensuring they are up to date and correct. This can be very useful in dealing with a wide range of data sources and volumes (Figure 2). Your team will detect errors before they threaten the applications in production. And you’ll have more time to fix problems before you reach production where they become more problematic.

Figure 2: The variety of today’s data sources, targets, and associated platforms

Conclusions

Many data integration/migration teams have found that it is possible to be successful with multiple levels and types of automated ETL testing throughout their DataOps SDLC.

Automated testing will not replace all manual unit, component, and end-to-end testing on a DataOps project. However, the emphasis on automated testing will ensure that the more expensive manual testing is focused on high-risk, high-value activities.

Creating automated ETL tests is well worth the effort, especially in data warehouse and data pipeline projects. Automated tests can be run hundreds of times at a modest overall cost with greater accuracy.

Part 2 in this series will 1) feature a “roadmap” toward ETL test automation and 2) offer advice to help readers determine which ETL tests should be considered for automated testing.