Data lakes and semantic layers have been around for a long time, each living in its own walled garden and tightly coupled to fairly narrow use cases. As data and analytics infrastructure migrates to the cloud, many are questioning how these foundational technology components fit into the modern data and analytics stack. In this article, we will dive into how a data lakehouse and a semantic layer together upend the traditional relationship between data lakes and analytics infrastructure. We’ll learn how a semantic lakehouse can dramatically simplify cloud data architectures, eliminate unnecessary data movement, and reduce both time to value and cloud costs.

The Traditional Data and Analytics Architecture

In 2006, Amazon launched Amazon Web Services (AWS) as a new way to offload the on-premises data center to the cloud. One of its core services was an object storage service, Amazon S3, and with it the first cloud data lake was born. Other cloud vendors later introduced their own versions of cloud data lake infrastructure.

For most of its life, the cloud data lake has been relegated to the role of dumb, cheap data storage: a staging area for raw data until that data could be processed into something useful. For analytics, the data lake served as a holding pen for data until it could be copied and loaded into an optimized analytics platform, typically a relational cloud data warehouse feeding OLAP cubes, proprietary business intelligence (BI) tool data extracts such as Tableau Hyper or Power BI Premium, or both. As a result of this processing pattern, data had to be stored at least twice: once in its raw form and once in its “analytics optimized” form.

Not surprisingly, most traditional cloud analytics architectures look like the diagram below:

As you can see, the “analytics warehouse” is responsible for most of the functions that deliver analytics to consumers. This architecture has several problems:

- Data is stored twice, which increases costs and creates operational complexity.

- Data in the analytics warehouse is a snapshot, so it starts going stale the moment it is loaded.

- Data in the analytics warehouse is typically a subset of the data in the data lake, which limits the questions consumers can ask.

- The analytics warehouse scales separately and differently from the cloud data platform, introducing additional costs, security concerns and operational complexity.

Given these drawbacks, you might ask, “Why would cloud data architects choose this design pattern?” The answer lies in the demands of analytics consumers. While the data lake could theoretically serve analytical queries directly to consumers, in practice it is too slow and incompatible with popular analytics tools.

If only the data lake could deliver the benefits of an analytics warehouse and we could avoid storing data twice!

The Birth of the Data Lakehouse

The term “Lakehouse” was popularized in 2020 by the seminal Databricks blog post “What is a Lakehouse?” by Ben Lorica, Michael Armbrust, Reynold Xin, Matei Zaharia, and Ali Ghodsi. The authors introduced the idea that the data lake could serve as an engine for delivering analytics, not just a static file store.

Data lakehouse vendors delivered on this vision by introducing high-speed, scalable query engines that work directly on raw data files in the data lake and expose an ANSI-standard SQL interface. With this key innovation, proponents of this architecture argue that data lakes can behave like an analytics warehouse without the need to duplicate data.

However, the analytics warehouse also performs other vital functions that the data lakehouse architecture alone does not provide, including:

- Consistently delivering “speed of thought” response times (under 2 seconds) across a wide range of queries.

- Presenting a business-friendly semantic layer that allows consumers to ask questions without needing to write SQL.

- Applying data governance and security at query time.

So, for a data lakehouse to truly replace the analytics warehouse, we need something else.

The Role of the Semantic Layer

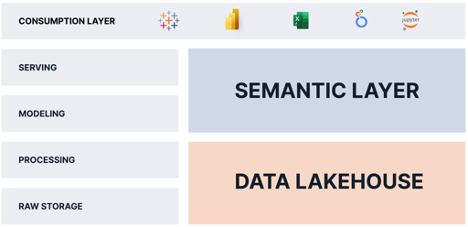

I’ve written a lot about the role of the semantic layer in the modern data stack. To summarize, a semantic layer is a logical view of business data that leverages data virtualization technology to translate physical data into business-friendly data at query time.
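To make the translation step concrete, here is a minimal Python sketch, assuming a toy semantic model expressed as a dictionary. The model, the `orders` table, its columns, and the `to_sql` helper are all hypothetical; an in-memory SQLite table stands in for data in the lakehouse, whereas real semantic layer platforms use their own modeling languages and query engines.

```python
import sqlite3

# Hypothetical semantic model: business-friendly names mapped to physical SQL.
# Table and column names are illustrative assumptions, not a real schema.
SEMANTIC_MODEL = {
    "table": "orders",
    "metrics": {
        "Total Revenue": "SUM(amount_usd)",
        "Order Count": "COUNT(*)",
    },
    "dimensions": {
        "Region": "region_cd",
        # strftime() is SQLite-specific; other engines use their own date functions.
        "Order Year": "strftime('%Y', order_dt)",
    },
}


def to_sql(metrics, dimensions, model=SEMANTIC_MODEL):
    """Translate business metric/dimension names into SQL at query time."""
    dim_exprs = [f'{model["dimensions"][d]} AS "{d}"' for d in dimensions]
    metric_exprs = [f'{model["metrics"][m]} AS "{m}"' for m in metrics]
    sql = f"SELECT {', '.join(dim_exprs + metric_exprs)} FROM {model['table']}"
    if dimensions:
        group_cols = ", ".join(model["dimensions"][d] for d in dimensions)
        sql += f" GROUP BY {group_cols} ORDER BY {group_cols}"
    return sql


# An in-memory SQLite table stands in for raw data in the lakehouse.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region_cd TEXT, order_dt TEXT, amount_usd REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("EMEA", "2023-01-15", 100.0),
        ("EMEA", "2023-02-01", 50.0),
        ("APAC", "2023-03-10", 75.0),
    ],
)

# A consumer asks for "Total Revenue by Region" without writing SQL.
query = to_sql(["Total Revenue"], ["Region"])
print(query)
print(conn.execute(query).fetchall())  # [('APAC', 75.0), ('EMEA', 150.0)]
```

The design point is that business definitions live in one place: any tool that asks for “Total Revenue by Region” receives the same generated SQL, and the query runs against the data where it already lives rather than against a copy.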

By adding a semantic layer platform on top of a data lakehouse, we can eliminate the analytics warehouse altogether because the semantic layer platform:

- Delivers “speed of thought” queries on the data lakehouse using data virtualization and automated query performance tuning.

- Delivers a business-friendly semantic layer that replaces the proprietary semantic views that are embedded inside each BI tool and allows business users to ask questions without needing to write SQL queries.

- Delivers data governance and security at query time.
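Query-time governance can be sketched the same way. In this minimal Python example, which assumes a hypothetical per-user policy table (`ROW_POLICIES`) and hypothetical helpers (`secure_source`, `run_as`), the semantic layer wraps the physical table in a row-level filter before every query reaches the engine, so the rule is enforced once rather than re-implemented in each BI tool. SQLite again stands in for the lakehouse engine; production platforms define policies in their own configuration.

```python
import sqlite3

# Hypothetical row-level security policy: the regions each user may see.
ROW_POLICIES = {
    "alice": ["EMEA"],
    "bob": ["EMEA", "APAC"],
}


def secure_source(user, table="orders"):
    """Return a filtered subquery (and its parameters) limited to the user's rows."""
    allowed = ROW_POLICIES.get(user)
    if not allowed:
        # Deny by default: a user with no policy gets no data.
        raise PermissionError(f"no data access policy for user {user!r}")
    placeholders = ", ".join("?" for _ in allowed)
    return f"(SELECT * FROM {table} WHERE region_cd IN ({placeholders}))", list(allowed)


def run_as(conn, user, select_clause, group_by=None):
    """Run a query for a user; the filter is applied before any aggregation.

    select_clause and group_by are assumed to come from the semantic model,
    not from raw user input, since they are interpolated into the SQL text.
    """
    source, params = secure_source(user)
    sql = f"SELECT {select_clause} FROM {source} AS t"
    if group_by:
        sql += f" GROUP BY {group_by} ORDER BY {group_by}"
    return conn.execute(sql, params).fetchall()


# In-memory stand-in for lakehouse data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region_cd TEXT, amount_usd REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("EMEA", 100.0), ("EMEA", 50.0), ("APAC", 75.0), ("AMER", 200.0)],
)

print(run_as(conn, "alice", "SUM(amount_usd)"))  # [(150.0,)]
print(run_as(conn, "bob", "region_cd, SUM(amount_usd)", "region_cd"))
```

Because the filter wraps the table before aggregation, Alice’s total covers only EMEA rows instead of filtering an already-computed result, and the AMER rows are never visible to either user.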

A semantic layer platform supplies the pieces that the data lakehouse is missing. By combining a semantic layer with a data lakehouse, organizations can:

- Eliminate data copies and simplify data pipelines.

- Consolidate data governance and security.

- Deliver a “single source of truth” for business metrics.

- Reduce operational complexity by keeping the data in the data lake.

- Provide access to more data and more timely data to analytics consumers.

The Semantic Lakehouse: Everybody Wins

Everybody wins with this architecture. Consumers get access to more fine-grained, up-to-date data without waiting for it to be copied into a separate warehouse. IT and data engineering teams have less data to move and transform. Finance spends less on cloud infrastructure.

As you can see, by combining a semantic layer with a data lakehouse, organizations can simplify their data and analytics operations, and deliver more data, faster, to more consumers, with less cost.