Without comprehensive data quality validation, a data lake becomes a data swamp that offers no clear link to value creation. As organizations rapidly adopt cloud data lakes, validating data in real time has become critical.

Accurate, consistent, and reliable data fuels algorithms, operational processes, and effective decision-making. Existing data validation approaches are rule-based, which makes them resource-intensive, time-consuming, costly, and hard to scale to thousands of data assets. A cost-effective validation approach that does scale to that many assets is urgently needed.

The Business Impact of Data Quality Issues in a Data Lake

The following examples from Global 2000 organizations demonstrate the need to establish data quality checks on each data asset present in the data lake.

Scenario 1: ETL Jobs Fail to Identify Files in a Data Lake

New subscribers of an insurance company could not use telehealth services for more than a week. The root cause was that the data engineering team was not aware that the insurance company had been onboarded as a new client, so the ETL jobs did not pick up the enrollment files that landed in the Azure data lake.

Scenario 2: Trading Company Ingests Data Without Validation

Commodity traders at a trading company could not find user-level credit information for a certain group of users on a Monday morning because a report was blank, which disrupted trading activities for two hours. The credit file received from another application had an empty credit field, and the file was not checked before being loaded into BigQuery.

Scenario 3: Misinformation Due to Poor Preprocessing

Supply chain executives at a restaurant chain were surprised by a report showing that consumption in the U.K. had doubled in May. Because of a processing error, the May consumption file had been appended to the April consumption file before being stored in the AWS data lake, so the report counted both months together.

Current Approach and Challenges

The current focus in cloud data lake projects is on data ingestion, the process of moving data from multiple data sources (often in different formats) into a single destination. After ingestion, data moves through the data pipeline, which is where data errors and issues begin to surface. Our research estimates that, on average, 30 to 40% of any analytics project is spent identifying and fixing data issues. In extreme cases, the project is abandoned entirely.

Current data validation approaches are designed to establish data quality rules for one container or bucket at a time. As a result, implementing them across thousands of buckets or containers is very costly. This container-by-container focus often leads to an incomplete set of rules, or to no rules being implemented at all.

Operational Challenges in Integrating Data Validation Solutions

In general, the data engineering team experiences the following operational challenges while integrating data validation solutions:

- Analyzing the data and consulting subject matter experts to determine which rules need to be implemented takes time

- Rules must be implemented separately for each container, so the effort grows linearly with the number of containers/buckets/folders in the data lake

- Existing open-source tools/approaches come with limited audit trail capability. Generating an audit trail of the rule execution results for compliance requirements often takes time and effort from the data engineering team

- Maintaining the implemented rules

Machine Learning-Based Approach for Data Quality

Instead of deriving data quality rules through profiling, analysis, and consultations with subject matter experts, standardized unsupervised machine learning (ML) algorithms can be applied at scale to the data lake buckets/containers to determine acceptable data patterns and identify anomalous records. We have had success applying the following algorithms to detect data errors in financial services and Internet of Things (IoT) data. All of them are available in open-source ML packages:

- DBSCAN [1]

- Principal component analysis and Eigenvector analysis [2]

- Association mining [3]
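To make the first of these concrete, the following is a minimal, standard-library-only sketch of DBSCAN used as an anomaly detector: records that do not belong to any dense cluster are labeled as noise and treated as anomalous. The sample records, the two numeric fields, and the `eps` and `min_samples` values are hypothetical and chosen only for illustration. In practice a library implementation such as scikit-learn's `sklearn.cluster.DBSCAN` would normally be used, and features would need scaling first.

```python
import math

NOISE = -1  # label for records that belong to no dense cluster


def dbscan(points, eps, min_samples):
    """Return one label per point: a cluster id (0, 1, ...) or NOISE."""
    n = len(points)
    # eps-neighborhood of every point (each point counts as its own neighbor)
    neighbors = [
        [j for j in range(n) if math.dist(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n
    cluster_id = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = NOISE  # may be relabeled later as a border point
            continue
        # i is a core point: start a new cluster and expand it
        cluster_id += 1
        labels[i] = cluster_id
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster_id  # border point joins the cluster
            if labels[j] is not None:
                continue
            labels[j] = cluster_id
            if len(neighbors[j]) >= min_samples:
                queue.extend(neighbors[j])  # j is also a core point
    return labels


# Hypothetical records with two numeric fields, e.g. (amount, quantity)
records = [
    (100.0, 10.0), (101.0, 11.0), (99.0, 10.5), (100.5, 9.5), (102.0, 10.0),
    (200.0, 20.0), (201.0, 21.0), (199.0, 19.5), (200.5, 20.5),
    (150.0, 95.0),  # far from both dense groups
]

labels = dbscan(records, eps=3.0, min_samples=3)
anomalies = [rec for rec, label in zip(records, labels) if label == NOISE]
print(anomalies)
```

The appeal of this kind of approach for a data lake is that no per-container rule has to be written: the same algorithm learns what "dense" looks like from each data asset, although `eps` and `min_samples` still have to be chosen or tuned.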

The anomalous records can then be used to measure a data trust score through the lens of standardized data quality dimensions, as shown below:

- Freshness: Determine if the data has arrived before the next step of the process.

- Completeness: Determine the completeness of contextually important fields. These fields should be identified using mathematical and/or machine learning techniques.

- Conformity: Determine whether contextually important fields conform to the expected pattern, length, and format.

- Uniqueness: Determine the uniqueness of the individual records.

- Drift: Determine the drift of the key categorical and continuous fields from the historical information.

- Anomaly: Determine volume and value anomalies in critical columns.

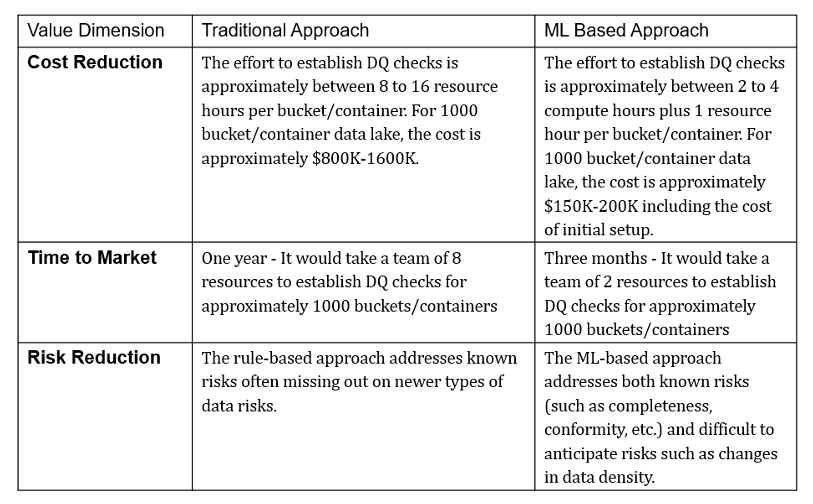
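As a rough illustration of how some of these dimensions could be turned into numbers, the sketch below computes completeness, conformity, uniqueness, and a simple mean-based drift measure using only the Python standard library. The sample rows, the field names, the currency pattern, and the unweighted average used for the trust score are all assumptions made for this example, not a prescribed scoring method.

```python
import re
import statistics
from collections import Counter


def completeness(rows, field):
    """Share of rows where `field` is present and non-empty."""
    filled = sum(1 for r in rows if r.get(field) not in (None, ""))
    return filled / len(rows)


def conformity(rows, field, pattern):
    """Share of non-empty values of `field` that fully match `pattern`."""
    values = [r[field] for r in rows if r.get(field) not in (None, "")]
    if not values:
        return 0.0
    return sum(1 for v in values if re.fullmatch(pattern, v)) / len(values)


def uniqueness(rows, key_fields):
    """Share of rows whose key value occurs exactly once."""
    keys = [tuple(r.get(f) for f in key_fields) for r in rows]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(rows)


def mean_drift(current, history):
    """Shift of the current mean, in units of the historical std deviation."""
    shift = abs(statistics.mean(current) - statistics.mean(history))
    return shift / statistics.stdev(history)


# Hypothetical credit records, loosely modeled on Scenario 2
rows = [
    {"user_id": "U001", "credit_limit": "5000", "currency": "USD"},
    {"user_id": "U002", "credit_limit": "", "currency": "USD"},
    {"user_id": "U003", "credit_limit": "7500", "currency": "usd"},
    {"user_id": "U003", "credit_limit": "7500", "currency": "USD"},
]

scores = {
    "completeness": completeness(rows, "credit_limit"),
    "conformity": conformity(rows, "currency", r"[A-Z]{3}"),
    "uniqueness": uniqueness(rows, ["user_id"]),
}
# Assumption: the trust score is an unweighted average of the dimensions
trust_score = sum(scores.values()) / len(scores)

# Hypothetical consumption figures: a doubling like the one in Scenario 3
drift = mean_drift(current=[200, 204, 196], history=[100, 102, 98, 101, 99])

print(scores, round(trust_score, 2), round(drift, 1))
```

A check like `completeness` would have flagged the empty credit field in Scenario 2 before the load, and a drift measure far above a chosen threshold would have flagged the doubled consumption in Scenario 3. How the individual dimensions are weighted into one trust score is a design choice that depends on the data asset.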

ROI Comparison

The benefits of ML-based data quality fit broadly in two categories: quantitative and qualitative. While the quantitative benefits make the most powerful argument in a business case, the value of the qualitative benefits should not be ignored.

Conclusion

Data is the most valuable asset for today’s organizations. Current approaches to validating data are full of operational challenges that lead to a trust deficit and to time-consuming, costly methods for fixing data errors. There is an urgent need to adopt a standardized, autonomous approach to validating the cloud data lake so that it does not become a data swamp.

References

[1] J. Waller, Outlier Detection Using DBSCAN (2020), Data Blog

[2] S. Serneels et al., Principal component analysis for data containing outliers and missing elements (2008), ScienceDirect

[3] S. B. Hassine et al., Using association rules to detect data quality issues, MIT Information Quality (MITIQ) Program