DATE: March 15, 2023. This event has passed. Recordings will be made available on demand within a week of the live event.

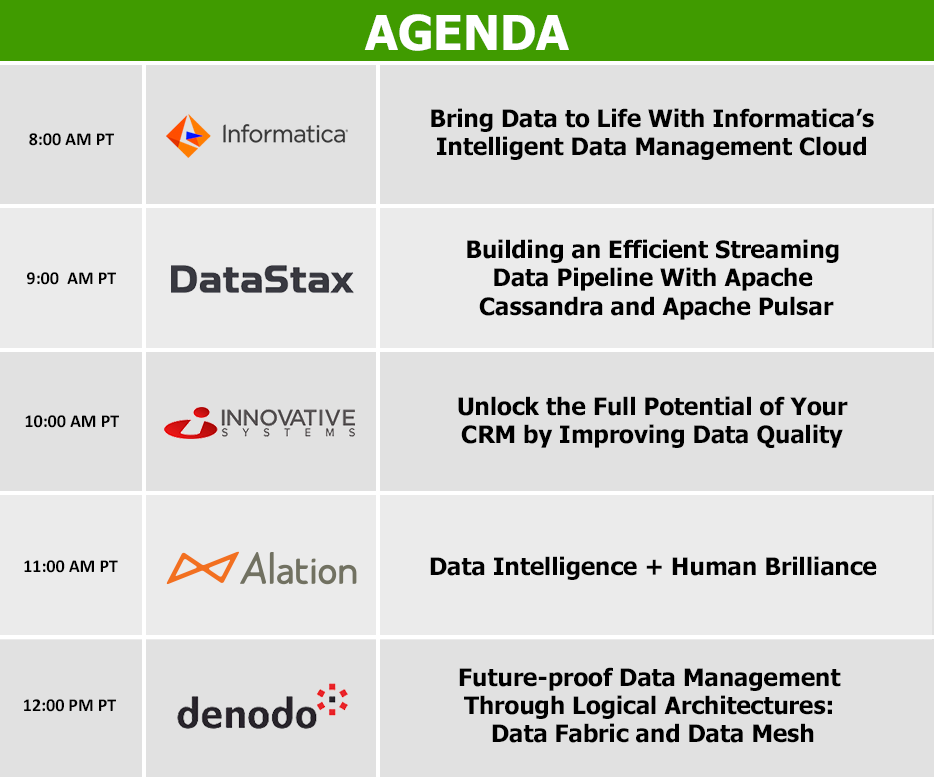

TIME: 8:00 AM – 12:45 PM Pacific / 11:00 AM – 3:45 PM Eastern

PRICE: Free to all attendees

Welcome to DATAVERSITY Demo Day, an online exhibit hall. We’ve had many requests from our data-driven community for a vendor-driven online event where you can learn, directly from the vendors, about the tools and services that could contribute to the success of your Enterprise Data Management program.

Register to attend one or all of the sessions, or to receive the follow-up email with links to the slides and recordings.

Session 1: Bring Data to Life With Informatica’s Intelligent Data Management Cloud

Informatica’s Intelligent Data Management Cloud™ (IDMC) is a comprehensive cloud data management solution that can help grow your business with trusted data for analytics and AI, reduce costs and improve process efficiency with automated workflows, provide a single source of truth for a better customer experience (CX), and increase ROI with a modern multi-cloud/hybrid data stack. Get a closer look at Informatica’s enterprise data management capabilities, including data catalog, data integration, API and application integration, data quality, master data management, and data marketplace, to name a few.

Session 2: Building an Efficient Streaming Data Pipeline With Apache Cassandra and Apache Pulsar

Event streaming is one of the most important software technologies of the current computing era because it enables systems to process huge volumes of data at very high speed and in real time. It is the “glue” that lets data flow through the disparate systems and pipelines typical of cloud environments. Built on the publish/subscribe (pub/sub) pattern for message flow and designed with the cloud in mind, Apache Pulsar has emerged in recent years as a powerful distributed messaging and event streaming platform. Its flexible, decoupled messaging style lets it integrate well with many modern libraries and frameworks.

In this session, we will build a modern, efficient streaming data pipeline using Apache Pulsar and Apache Cassandra. Apache Pulsar will handle data ingestion. Incoming external data will then be processed by Pulsar Functions, which use tables in Cassandra as lookup sources. The transformed results will then be sent to an Astra DB sink. We will also examine how the entire processing pipeline can be further optimized.
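As a rough illustration of the data flow described above (ingest, enrich each event against a lookup table, write the result to a sink), here is a minimal pure-Python sketch. It does not use the Pulsar or Cassandra client libraries and is not the session's code: the dict `PRODUCT_LOOKUP` stands in for a Cassandra table, a plain list stands in for the Astra DB sink, and the `Order` record and the `enrich` and `run_pipeline` functions are hypothetical names chosen only for this example.

```python
from dataclasses import dataclass

# Hypothetical stand-in for a Cassandra lookup table, keyed by product ID.
# In a real pipeline this would be a query against Cassandra, not a dict.
PRODUCT_LOOKUP = {
    "p-100": {"name": "Widget", "unit_price": 2.50},
    "p-200": {"name": "Gadget", "unit_price": 10.00},
}


@dataclass
class Order:
    """Hypothetical incoming event, as it might arrive on an input topic."""
    order_id: str
    product_id: str
    quantity: int


def enrich(order, lookup):
    """Per-message transform (hypothetical): join one event with reference
    data, the role a Pulsar Function would play against a Cassandra table."""
    product = lookup.get(order.product_id)
    if product is None:
        # Unknown product: drop the event in this sketch.
        return None
    return {
        "order_id": order.order_id,
        "product_name": product["name"],
        "total": round(product["unit_price"] * order.quantity, 2),
    }


def run_pipeline(events, lookup, sink):
    """Ingest -> transform -> sink; `sink` is a list standing in for Astra DB."""
    for event in events:
        result = enrich(event, lookup)
        if result is not None:
            sink.append(result)
    return sink


if __name__ == "__main__":
    incoming = [
        Order("o-1", "p-100", 4),
        Order("o-2", "p-999", 1),  # no matching lookup row, so it is dropped
        Order("o-3", "p-200", 2),
    ]
    for row in run_pipeline(incoming, PRODUCT_LOOKUP, []):
        print(row)
```

Keeping the transform a pure function of the event and the lookup data makes it easy to test in isolation before wiring it to real topics, tables, and sinks.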

Session 3: Unlock the Full Potential of Your CRM by Improving Data Quality

To realize the benefits of better customer service, increased sales, and improved customer retention that CRM systems can provide, companies must work with data that is free from duplicates, misplaced information, and formatting errors. Poor-quality data leads to incorrect conclusions, misinformed decision-making, and unhappy customers, yet many companies don’t even know how bad their data is because quality issues are difficult to detect, let alone correct.

Join us to see how Enlighten® can be used to audit data to uncover quality issues, cleanse and standardize data to improve quality, and extend the value of data by validating addresses and finding matches. Whether you are migrating a large volume of data or need real-time data quality, the Enlighten suite of data quality products has the solution. Topics to be covered include:

- Removing duplicates and cleansing data to correct errors

- How a crowdsourced knowledgebase improves speed and accuracy

- Matching and linking records to build stronger relationships

- Validating addresses and geocoding for deeper customer insight

Session 4: Data Intelligence + Human Brilliance

Want to reduce the time spent searching for data so you can increase the time spent leveraging it to make decisions and reduce risk?

Join us at this demo to learn how a data catalog acts as a search engine for your data, helping your organization succeed by:

- Organizing data, enabling data discovery, and allowing users to search like librarians — not data scientists

- Overcoming silos so all data in all data sources is visible to all employees at the metadata level

- Becoming more useful the more people use it

Session 5: Future-proof Data Management Through Logical Architectures: Data Fabric and Data Mesh

Data fabric and data mesh are two concepts frequently mentioned in conversations about enterprise data management. Over the past two decades, enterprises have managed data by cycling between centralization and decentralization. Despite the abundance of options, the conundrum remains: businesses want their data in one place and easy to find, yet collecting all of it into a single location continues to be a challenge. Data fabric and data mesh designs, powered by data virtualization, can help businesses solve these challenges in different ways.

Attend this webinar to learn from Chris Walters, Sales Engineer and subject matter expert:

- Why a logical architecture holds the key to the future of data management

- What fundamental principles underlie data fabric and data mesh

- How you can build a logical data fabric or data mesh with the Denodo Platform