In 2002, internet researchers wanted a better search engine, preferably an open-source one. Doug Cutting and Mike Cafarella set out to build it, and they called their project “Nutch.” Hadoop was originally designed as part of the Nutch infrastructure and was introduced in 2005.

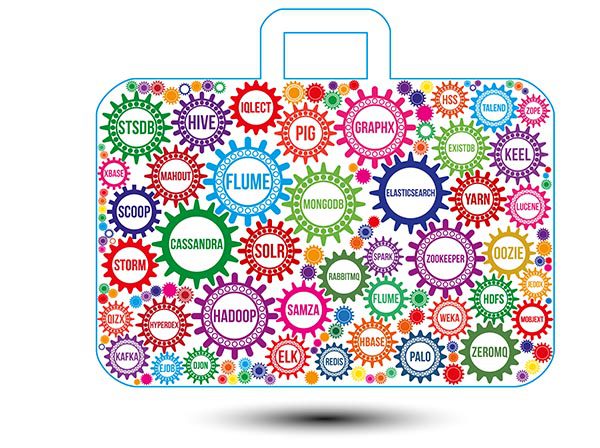

The Hadoop ecosystem narrowly refers to the software components built around Hadoop Common (the utilities and libraries supporting Hadoop), and more broadly includes the tools and accessories offered by the Apache Software Foundation and the ways they work together. Hadoop is a Java-based framework for handling and analyzing large amounts of data. Both the basic Hadoop package and most of its accessories are open-source projects released under the Apache license. The concept of a Hadoop ecosystem includes the different parts of Hadoop’s core, such as MapReduce, the Hadoop Distributed File System (HDFS), and YARN, Hadoop’s resource manager.

MapReduce

In 2004, Google presented a new Map/Reduce algorithm designed for distributed computation. This evolved into MapReduce, a basic component of Hadoop. It is the Java-based data processing layer where the data stored in HDFS actually gets processed, and it is designed to handle large amounts of structured and unstructured data. MapReduce breaks a big data processing job into smaller, independent tasks and runs those tasks in parallel across the cluster, which lets it manage huge data files.

In the initial “Map” phase, the application’s processing logic is applied to each piece of the input independently, producing intermediate key-value pairs. In the “Reduce” phase, the intermediate values that share a key are combined into a final result. In Hadoop’s ecosystem, MapReduce offers a framework that makes it easier to write applications that run on thousands of nodes and analyze large datasets in parallel before reducing them to find the results. The basic way MapReduce operates is by sending a processing query to the various nodes and then collecting and combining the results into the output.
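The map and reduce phases can be illustrated with a minimal in-memory sketch. This is plain Python, not the Hadoop API: the function names (`map_phase`, `shuffle`, `reduce_phase`, `run_job`) are hypothetical, and the example assumes a simple word count, where a real cluster would run the map calls on many nodes at once.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit one (word, 1) pair for every word in an input record."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group all intermediate values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def run_job(lines):
    """Run map, shuffle, and reduce sequentially (illustration only)."""
    intermediate = []
    for line in lines:  # on a cluster, each record could be mapped on a different node
        intermediate.extend(map_phase(line))
    grouped = shuffle(intermediate)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(run_job(["big data big cluster", "data node"]))
```

Because each call to `map_phase` depends only on its own input line, the calls are independent of one another, which is the property that allows the work to be spread across nodes.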

Hadoop Distributed File System (HDFS)

Apache Hadoop’s big data storage layer is called the Hadoop Distributed File System, or HDFS for short. Originally, it was called the Nutch Distributed File System and was developed as a part of the Nutch project in 2004. It officially became part of Apache Hadoop in 2006.

Users can load huge datasets into HDFS and process the data there. Apache Hadoop treats hardware failure as the rule rather than the exception. An HDFS instance may use hundreds of server machines, with each server storing part of the system’s data. Each of the many servers and their components has some probability of failure, meaning that, in practice, some component of HDFS is always non-functional. With this in mind, the detection of faults and quick, automatic recovery from them have been core architectural goals of HDFS.
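The reasoning behind “failure as the rule” can be checked with a little arithmetic. The sketch below assumes that components fail independently and that each one has the same probability of being down at a given moment; both assumptions, and the figure of 0.001, are illustrative rather than measured values.

```python
def prob_any_failed(num_components, p_fail):
    """Probability that at least one of num_components independent
    components is down, given each is down with probability p_fail."""
    return 1.0 - (1.0 - p_fail) ** num_components


if __name__ == "__main__":
    # p_fail = 0.001 is an assumed, illustrative value
    for n in (1, 100, 1000, 10000):
        print(n, prob_any_failed(n, 0.001))
```

Under these assumptions, the chance that something is broken climbs toward certainty as the component count grows, which is why automatic detection and recovery are treated as design goals rather than edge cases.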

The other core aspects of HDFS are:

- Streaming Data Access: Applications running on HDFS require streaming access to their data. They are not the general-purpose applications that typically run on “normal” file systems. HDFS is designed for batch processing rather than interactive use.

- Large Data Sets: Applications running on HDFS work with large data sets. A file in HDFS is normally in the gigabytes to terabytes range, so HDFS should provide high aggregate data bandwidth and scale to hundreds of nodes in a single cluster. HDFS is capable of supporting several million files in a single instance.

- Simple Coherency Model: An HDFS application uses a write-once-read-many access model. Appending content to the end of a file is supported, but a file cannot be updated at an arbitrary point. This simplifies data coherency and enables high-throughput data access. MapReduce applications (or web crawling applications) are excellent fits for this model.

- Import/Export Data to and from HDFS: In Hadoop, data can be imported to the HDFS from a variety of diverse sources. After the data has been imported, a necessary level of processing can take place using MapReduce or with a language, such as Hive or Pig. The Hadoop system offers the flexibility to process huge volumes of data while simultaneously exporting processed data to other locations using Sqoop.
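The write-once-read-many model described under “Simple Coherency Model” can be pictured with a toy in-memory class. This is not the HDFS client API; `WriteOnceFile` and its methods are hypothetical names used only to show which operations the model permits.

```python
class WriteOnceFile:
    """Toy model of a write-once-read-many file (not the HDFS API)."""

    def __init__(self):
        self._data = bytearray()

    def append(self, chunk: bytes) -> None:
        """Appending at the end of the file is allowed."""
        self._data.extend(chunk)

    def read(self, offset: int = 0, length: int = -1) -> bytes:
        """Reads may start anywhere and may be repeated any number of times."""
        end = len(self._data) if length < 0 else offset + length
        return bytes(self._data[offset:end])

    def write_at(self, offset: int, chunk: bytes) -> None:
        """Updating existing bytes in place is rejected."""
        raise PermissionError("in-place updates are not supported; append instead")


if __name__ == "__main__":
    f = WriteOnceFile()
    f.append(b"crawl-record-1\n")
    f.append(b"crawl-record-2\n")
    print(f.read())
```

Since existing bytes never change, readers do not need to coordinate with writers about which version of a region they are seeing, which is the simplification the model is after.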

Cloudera

Cloudera was started in 2008 and has provided significant support to the Hadoop ecosystem, both in terms of its use and in the development of tools. It was founded by three experienced engineers, Christophe Bisciglia (from Google), Amr Awadallah (from Yahoo!), and Jeff Hammerbacher (from Facebook), together with Oracle executive Mike Olson. It is a software company that provides a platform for machine learning, data warehousing, data engineering, and analytics. Cloudera runs in their cloud or can be used for in-house projects.

Cloudera is a sponsor of the Apache Software Foundation. It began as an open-source, hybrid Apache Hadoop distribution focused on enterprise-class deployments of the technology. Cloudera has stated that more than 50 percent of its engineering output is donated upstream to the various Apache-licensed open-source projects that combine to form the Apache Hadoop platform. Doug Cutting (one of the two original Hadoop developers, and a former chairman of the Apache Software Foundation) joined Cloudera in 2009.

Tools

In the big data industry, the challenge is the volume of data. Some tasks include a count of the distinct IDs taken from log files, transforming stored data for a certain date range, and page rankings. All these tasks can be resolved with various tools and techniques in Hadoop. Some of the more popular tools for developers include:

- Apache Hive: A data analysis tool originally developed by Facebook and released around August, 2008.

- Apache Pig: A data flow language which started as a research project for Yahoo! in 2006 to work with MapReduce. In 2007 it was open-sourced via the Apache incubator. In 2008 the first release of “Apache” Pig came out.

- HBase: A Hadoop database acting as a scalable, distributed, big data store which began as a research project by a business named Powerset. Apache HBase was released in February, 2007.

- Apache Spark: A general engine for processing big data started originally at UC Berkeley as a research project in 2009. Spark was open-sourced in 2010. It moved to the Apache Software Foundation in 2013.

- Sqoop: Used to import data from external sources into related Hadoop components such as HDFS, Hive, or HBase. It can also export data from Hadoop, sending it to other external locations. Sqoop was initially developed and maintained by Cloudera and transferred to Apache in July, 2011. In April, 2012, the Sqoop project became an Apache top-level project.

- Ambari: A Hadoop ecosystem manager developed in 2012 by Hortonworks which helps organize the combined use of various Apache resources.

Apache YARN

YARN (Yet Another Resource Negotiator) is a core part of Hadoop, including version 3.2.0. It lets a cluster run a variety of Hadoop applications as workloads grow. YARN is often described as the operating system for Hadoop: it is responsible for managing workloads, monitoring, and implementing security controls. It also delivers data governance tools across Hadoop clusters. Workloads that run on YARN include batch processing and real-time streaming.

In 2006, Yahoo! adopted Apache Hadoop to replace its WebMap application. During the process, in 2007, Arun C. Murthy noted a problem and wrote a paper on it. Due to other priorities, Apache (and Murthy) waited until 2012 to resolve the problem and created YARN in the process. The basic concept behind YARN is to split job scheduling/monitoring and resource management into separate functions. This has resulted in a global ResourceManager and a per-application ApplicationMaster.
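The division of labor between the two roles can be sketched in a few lines of Python. This is a toy model, not YARN’s API: the class and method names below are hypothetical, resources are reduced to a simple count of “containers,” and every task is assumed to finish immediately.

```python
class ResourceManager:
    """Toy cluster-wide manager: only tracks how many containers are free."""

    def __init__(self, total_containers):
        self.free = total_containers

    def allocate(self, requested):
        """Grant as many containers as are available, up to the request."""
        granted = min(requested, self.free)
        self.free -= granted
        return granted

    def release(self, count):
        """Return containers to the shared pool."""
        self.free += count


class ApplicationMaster:
    """Toy per-application master: requests containers and tracks its own tasks."""

    def __init__(self, name, tasks, resource_manager):
        self.name = name
        self.pending = list(tasks)
        self.done = []
        self.rm = resource_manager

    def run_round(self):
        """Ask for containers, run that many tasks, then give the containers back."""
        granted = self.rm.allocate(len(self.pending))
        running, self.pending = self.pending[:granted], self.pending[granted:]
        self.done.extend(running)  # assumption: each task completes instantly
        self.rm.release(granted)
        return granted


if __name__ == "__main__":
    rm = ResourceManager(total_containers=3)
    am = ApplicationMaster("log-analysis", ["t1", "t2", "t3", "t4", "t5"], rm)
    while am.pending:
        print("granted", am.run_round(), "pending", am.pending)
```

In this sketch the resource manager knows nothing about tasks and the application master knows nothing about other applications, which mirrors the separation of resource management from per-job scheduling and monitoring.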

Hortonworks

Hortonworks started as an independent business in June, 2011. The Hortonworks Data Platform is designed to handle data from a variety of sources and formats. Their platform includes all the basic Hadoop technologies and additional components. Hortonworks merged with Cloudera in January, 2019.

Hadoop and Streaming Analytics

The Internet of Things has allowed organizations to use streaming analytics to take real-time actions. IBM Streams and Hortonworks Data Flow are two examples of tools that can be used to add and adjust data sources as needed. With these tools, a person can trace and audit different data paths and adjust data pipelines dynamically with the available bandwidth. These tools allow for the exploration of customer behavior, payment tracking, pricing, shrinkage analysis, consumer feedback, and more. These tools also allow organizations to optimize supply chains, inventory control, customer support, vendor score cards, etc.

In 2010, IBM joined with Columbia University in delivering critical insights significantly faster, by using “streaming analytics.” Medical professionals were able to analyze data with over 200 variables and identify patterns leading to earlier diagnoses. This evolved into a research tool for Hadoop, called IBM Streams.

In 2017, Hortonworks expressed a paradigm shift with the release of Hortonworks Data Flow. Jaime Engesser, VP of product management, said, “Hortonworks is moving from ‘We do Hadoop’ to ‘We do connected data architectures.’ If you look at the streaming analytics space, that’s where we’ve now doubled down.” (Hortonworks Data Flow is still an open-source tool, but is more flexible.)

The open-sourced Hortonworks Streaming Analytics Manager (2017) is a tool used for designing, developing, and managing streaming analytics applications, with the use of drag-and-drop visuals. Users can construct streaming analytics applications capable of creating alerts/notifications, event correlation, complex pattern matching, context enrichment, and analytical aggregations. The Analytics Manager offers immediate insights, using predictive and prescriptive analytics, and pattern matching. Applications for streaming analytics can be built and used within minutes without having to write any code.

Vinod Kumar Vavilapalli, the Vice President of Apache Hadoop, stated:

“The Apache Hadoop community continues to go from strength to strength in further driving innovation in Big Data. We hope that developers, operators, and users leverage our latest release in fulfilling their data management needs.”

Image used under license from Shutterstock.com