Data integration uses both technical and business processes to merge data from different sources, helping people access useful and valuable information efficiently. A well-thought-out data integration solution can deliver trusted data from a variety of sources. Data integration is gaining more traction within the business world due to the exploding volume of data and the need to share existing data. It encourages collaboration between internal and external users and makes the data more comprehensive. Without unified data, creating a single report would require logging into multiple accounts, accessing data from native apps, and reformatting and cleansing data before the analysis can start.

A key factor in making data useful and actionable is keeping it available and accessible from a central location. To be fully accessible, the data must be integrated. This principle is a major reason for the popularity of data warehouses. Most nonprofit organizations lack the volume of data needed to justify a data warehouse, but they can still recognize the value of ensuring that all useful data is available and accessible.

Data from several sources often needs to be translated and integrated for analytical purposes or operational actions. Staff from every department – and different geographical locations – increasingly require access to data for research purposes. Additionally, staff in each department generate and manipulate data that the rest of the organization needs. Data integration should be a collaborative and unified process that improves communication and efficiency across the organization.

When an organization integrates its data, preparing and analyzing that data takes significantly less time. Unified views are generated automatically instead of being missing altogether. Staff no longer have to build connections from scratch to create a report or build an application.

Additionally, integrating data by hand can be painfully slow, while using the right tools can save significant amounts of time (and resources) for the development teams. In turn, they can spend that time on other projects, making the organization more competitive and productive.

Reasons for Integrating Data

Integrated data reduces errors and rework. Manually gathering data requires staff to know where each account and data file is located, and they must double-check everything to ensure the data sets are complete and accurate. Additionally, when a data integration solution does not synchronize data, the data must be reintegrated periodically to account for inevitable changes. Automated updates, however, allow reports to synchronize and run efficiently in real time, whenever needed.

Data integration significantly improves the quality of an organization’s data, both immediately and over time. As data moves into the newly organized, centralized system, issues can be identified automatically, and many corrections can be applied automatically as well. Unsurprisingly, this produces more accurate data and analysis. Having all the data stored in one location also allows researchers to work far more efficiently.

Challenges to Data Integration

Unifying several data sources and merging them into a single structure can be quite difficult. As more and more organizations focus on data integration solutions, they must create processes for moving and translating unintegrated data. Listed below are common challenges organizations face when building integration systems:

- External data sources: The quality of data taken from external sources may not be as good as data from internal sources, making it less trustworthy. Additionally, privacy contracts with external vendors can make sharing data difficult.

- Legacy systems: Data integration efforts may include data stored in legacy systems. This data may, however, be missing markers communicating times and dates of activities, which modern systems normally include.

- Cutting-edge systems: New systems often generate different kinds of data (unstructured or real-time) from different kinds of sources (IoT devices, sensors, videos, and the cloud).

- Getting there: Organizations generally integrate their data to accomplish specific goals. The route taken should not be a series of ad hoc responses, but rather a planned-out process. Implementing data integration requires understanding the types of data that need to be gathered and analyzed. Where the data comes from and the types of analysis required are also important factors in deciding the best way to integrate the data.

- Up and running: After a data integration system has been installed and is fully functional, there is still work to do. The data team must keep data integration efforts in line with evolving best practices, and deal with the changing needs of the organization and various regulatory agencies.

Methods of Data Integration

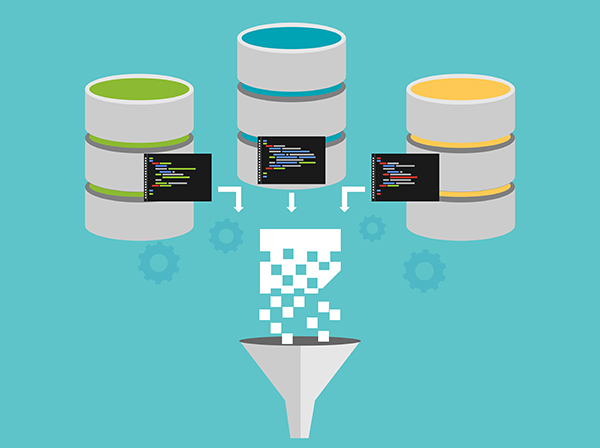

Though there is no standardized approach to integrating data, data integration solutions typically involve a few common elements, including a network of data sources, a master server, and clients accessing data from the master server. Conducting all these operations as efficiently as possible highlights the importance of data integration.

- Manual data integration describes the process of a person manually collecting the necessary data from different sources by accessing them directly. The data is cleaned as needed and stored in a single warehouse. This method of data integration is extremely inefficient and makes sense only for small organizations with an absolute minimum of data resources. There is no unified view of the data.

- Middleware data integration acts as a mediator and helps to normalize data before bringing it to the master data pool. Often, older legacy applications don’t work well with newer applications. Middleware offers a solution when data integration systems cannot access data from one of these legacy applications.

- Application-based integration uses software applications to locate, retrieve, and integrate the data. During this integration process, the software makes data from different sources compatible with a centralized system. Data integration software allows organizations to combine and manage all the data coming from multiple sources into a single platform.

- Uniform access integration focuses on developing a translation process that makes data consistent when accessed from different sources. In this situation, however, the data is left in the original source and is not copied to a central database. Using uniform access integration, object-oriented management systems can provide an appearance of uniformity between different types of databases.

- Common storage integration is currently the most popular approach used for storing integrated data. This system stores a copy of the data in the central location and provides a unified view, while the original stays in its local database. (Uniform access leaves data in the source.) Common storage integration supports the underlying principles of traditional data warehousing.
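
The difference between uniform access and common storage can be illustrated with a minimal Python sketch. Everything in it is hypothetical and chosen only for illustration: the two in-memory sources (`crm_rows`, `donation_rows`), their field names, and the `CommonStorage` class. A real system would read from databases or APIs rather than lists.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, str]

# Hypothetical sources that use different field names and formats.
crm_rows: List[Record] = [{"FullName": "Ada Lovelace", "Email": "ADA@EXAMPLE.ORG"}]
donation_rows: List[Record] = [{"donor": "Grace Hopper", "email_addr": "grace@example.org"}]


def normalize_crm(row: Record) -> Record:
    return {"name": row["FullName"], "email": row["Email"].lower()}


def normalize_donation(row: Record) -> Record:
    return {"name": row["donor"], "email": row["email_addr"].lower()}


SOURCES: List[Tuple[List[Record], Callable[[Record], Record]]] = [
    (crm_rows, normalize_crm),
    (donation_rows, normalize_donation),
]


def uniform_access_view() -> List[Record]:
    """Translate rows on every read; nothing is copied to a central store."""
    return [normalize(row) for rows, normalize in SOURCES for row in rows]


class CommonStorage:
    """Keep a translated copy centrally; the originals stay in their sources."""

    def __init__(self) -> None:
        self.rows: List[Record] = []

    def refresh(self) -> None:
        # Copy the translated rows into the central store.
        self.rows = uniform_access_view()

    def view(self) -> List[Record]:
        return list(self.rows)


store = CommonStorage()
store.refresh()

# A source changes after the central copy was taken.
crm_rows.append({"FullName": "Alan Turing", "Email": "alan@example.org"})

live_count = len(uniform_access_view())  # reads the sources, so it sees the new row
stored_count = len(store.view())         # the central copy is unchanged until refresh()
```

In this sketch the uniform access view always reflects the sources but pays the translation cost on every read, while the common storage copy is fast to query but only as fresh as its last refresh.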

Forms of Data Integration

Data consolidation is a form of data integration with the goal of reducing the number of storage locations. The process of ETL (extract, transform, and load) is a very useful tool for data consolidation. It pulls data from different sources, translates it into a functional format, and then sends it to the newly centralized database or data warehouse. This process filters, cleans, and translates the data, and then applies governance and business rules before storing the data in its new location.
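
The extract, transform, and load steps can be sketched in a few lines of Python using only the standard library. This is an illustration under stated assumptions, not a production pipeline: the two CSV exports, their column names, the `contacts` table, and the "a record must have a name" rule are all invented for the example.

```python
import csv
import io
import sqlite3

# Hypothetical exports from two systems with different layouts.
CRM_CSV = "name,email,amount\nAda Lovelace,ADA@EXAMPLE.ORG,100\n,missing@example.org,5\n"
EVENTS_CSV = "attendee;mail;paid\nGrace Hopper;grace@example.org;25.50\n"


def extract(text, delimiter):
    """Extract: read raw rows from one source."""
    return list(csv.DictReader(io.StringIO(text), delimiter=delimiter))


def transform(rows, name_key, email_key, amount_key):
    """Transform: filter, clean, and translate rows into the target layout."""
    cleaned = []
    for row in rows:
        name = row[name_key].strip()
        if not name:  # assumed business rule: a record must have a name
            continue
        cleaned.append((name, row[email_key].strip().lower(), float(row[amount_key])))
    return cleaned


def load(conn, rows):
    """Load: write the cleaned rows into the central table."""
    conn.execute("CREATE TABLE IF NOT EXISTS contacts (name TEXT, email TEXT, amount REAL)")
    conn.executemany("INSERT INTO contacts VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stands in for the central warehouse
load(conn, transform(extract(CRM_CSV, ","), "name", "email", "amount"))
load(conn, transform(extract(EVENTS_CSV, ";"), "attendee", "mail", "paid"))

rows = conn.execute("SELECT name, email, amount FROM contacts ORDER BY name").fetchall()
```

Here the nameless CRM row is filtered out during the transform step, and both sources end up in one table with consistent column names and lowercase email addresses.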

Data propagation uses applications to copy data from a central location and distribute it to other databases for easy access. Copies can be sent asynchronously (at different times) or synchronously. The majority of synchronous data propagation uses two-way communications between the central location and remote databases. EDR (enterprise data replication) and EAI (enterprise application integration) technologies both support data propagation. EAI integrates applications for the easy exchange of data, messages, and transactions, and it is typically used for real-time business transactions. (EAI integration platforms are available as a service.) EDR (not an application) is normally used to send large amounts of data to other databases, using database triggers and logs to record and share data changes between the central location and remote databases.
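
The trigger-and-log idea behind replication can be sketched with two in-memory SQLite databases. This is a simplified illustration, not how any particular EDR product works: the `contacts` and `change_log` tables, the trigger names, and the `propagate` function are all assumptions made for the example, and propagation here is asynchronous (the replica catches up only when `propagate` runs).

```python
import sqlite3

central = sqlite3.connect(":memory:")
replica = sqlite3.connect(":memory:")

# Triggers on the central table record every change in a log table.
central.executescript("""
CREATE TABLE contacts (id INTEGER PRIMARY KEY, email TEXT);
CREATE TABLE change_log (
    seq INTEGER PRIMARY KEY AUTOINCREMENT, op TEXT, id INTEGER, email TEXT
);
CREATE TRIGGER log_insert AFTER INSERT ON contacts BEGIN
    INSERT INTO change_log (op, id, email) VALUES ('upsert', NEW.id, NEW.email);
END;
CREATE TRIGGER log_update AFTER UPDATE ON contacts BEGIN
    INSERT INTO change_log (op, id, email) VALUES ('upsert', NEW.id, NEW.email);
END;
CREATE TRIGGER log_delete AFTER DELETE ON contacts BEGIN
    INSERT INTO change_log (op, id, email) VALUES ('delete', OLD.id, NULL);
END;
""")
replica.execute("CREATE TABLE contacts (id INTEGER PRIMARY KEY, email TEXT)")


def propagate(last_seq):
    """Replay log entries newer than last_seq on the replica, in order."""
    changes = central.execute(
        "SELECT seq, op, id, email FROM change_log WHERE seq > ? ORDER BY seq",
        (last_seq,),
    ).fetchall()
    for seq, op, row_id, email in changes:
        if op == "delete":
            replica.execute("DELETE FROM contacts WHERE id = ?", (row_id,))
        else:
            replica.execute(
                "INSERT OR REPLACE INTO contacts (id, email) VALUES (?, ?)",
                (row_id, email),
            )
        last_seq = seq
    replica.commit()
    return last_seq


central.execute("INSERT INTO contacts (id, email) VALUES (1, 'ada@example.org')")
central.execute("INSERT INTO contacts (id, email) VALUES (2, 'grace@example.org')")
central.execute("UPDATE contacts SET email = 'ada@new.example.org' WHERE id = 1")
central.execute("DELETE FROM contacts WHERE id = 2")
central.commit()

last_seq = propagate(0)
replica_rows = replica.execute("SELECT id, email FROM contacts ORDER BY id").fetchall()
```

Because only the logged changes are replayed, the replica does not need a full copy of the central table on each run; remembering the last applied sequence number is enough to resume.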

Data virtualization uses an interface to integrate data and offers a nearly real-time view of data from different sources. It also offers the option of using different data models. The data, which can be presented at one location, is taken from multiple locations. This process gathers and interprets data without requiring uniform formatting.

Data federation, technically, is a version of data virtualization. It builds a common data model using a virtual database for diverse data from different systems. Data is combined and presented using a single point of access. Data federation can be achieved using a technology called EII (enterprise information integration). This process uses data abstraction to present a unified view of data that comes from different sources. Then, researchers can analyze the data. Data federation and virtualization are good alternatives when data consolidation is cost-prohibitive or would create serious security and compliance issues.
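
A small-scale analogy for a virtual database with a common data model is a view that spans several attached SQLite databases. The sketch below is only an analogy, not an EII product: the `crm` and `giving` databases, their tables, and the `contacts` view are hypothetical, and in-memory databases stand in for separate source systems.

```python
import sqlite3

# One connection acts as the single point of access.
hub = sqlite3.connect(":memory:")
hub.execute("ATTACH DATABASE ':memory:' AS crm")
hub.execute("ATTACH DATABASE ':memory:' AS giving")

# Each source keeps its own schema and its own data.
hub.execute("CREATE TABLE crm.people (full_name TEXT, email TEXT)")
hub.execute("CREATE TABLE giving.donors (donor TEXT, email_addr TEXT, total REAL)")
hub.execute("INSERT INTO crm.people VALUES ('Ada Lovelace', 'ada@example.org')")
hub.execute("INSERT INTO giving.donors VALUES ('Grace Hopper', 'grace@example.org', 25.5)")

# The common data model is a view; no rows are copied out of the sources.
hub.execute("""
CREATE TEMP VIEW contacts AS
    SELECT full_name AS name, email, 'crm' AS source FROM crm.people
    UNION ALL
    SELECT donor AS name, email_addr AS email, 'giving' AS source FROM giving.donors
""")


def query_contacts():
    """Query the unified view; the sources are read at query time."""
    return hub.execute(
        "SELECT name, email, source FROM contacts ORDER BY name"
    ).fetchall()


before = query_contacts()
hub.execute("INSERT INTO crm.people VALUES ('Alan Turing', 'alan@example.org')")
after = query_contacts()  # the new source row appears without any reload step
```

The view gives researchers one table-like object to query, while the underlying rows never leave their original databases, which is the property that makes federation attractive when consolidation is impractical.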

Data warehousing involves cleansing, formatting, and storing data, which is essentially data integration. Data integration efforts, particularly among larger businesses, are often used to build data warehouses, combining multiple data sources to work with a relational database. Data warehouses provide the data needed by researchers running queries and generating analysis.

Image used under license from Shutterstock.com