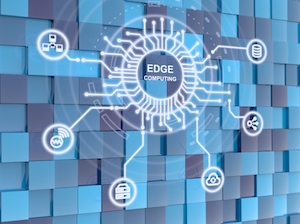

Some Edge Computing Use Cases Include:

- Personalizing the shopping experience

- Running autonomous vehicles (cars and trucks)

- Optimizing oil wells

- Monitoring home appliances

- Running building management systems

- Expanding 5G cellular networks

Other Definitions of Edge Computing Include:

- “A point of transition, a demarcation point where one thing ends and another begins.” (Destiny Bertucci and Patrick Hubbard, DATAVERSITY®)

- A means of allowing “data generated by the IoT to be processed near its source.” (Keith Foote, DATAVERSITY®)

- A “computing topology in which information processing and content collection and delivery are placed closer to the sources of this information.” (Kasey Panetta, Gartner)

- The practice of “processing data closer to where it is created instead of sending it across long routes to data centers or clouds.” (Brandon Butler, NetworkWorld)

Businesses Use Edge Computing to:

- Reduce latency.

- Handle business logic more efficiently.

- Allow the Internet of Things (IoT) to become smarter with analytical insight.

- Support closed networks and rugged environments (e.g., in a factory or plant).

- Provide backup data for a system.

- Analyze and store portions of data quickly and inexpensively.
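
As a rough illustration of analyzing data near its source, the minimal sketch below condenses a batch of sensor readings on a local device so that only a small summary would need to travel to a data center or cloud. The function name `summarize_at_edge`, the threshold, and the sample readings are hypothetical and chosen only for this example.

```python
from statistics import mean


def summarize_at_edge(readings, alert_threshold):
    """Reduce a batch of raw readings to a compact summary (hypothetical helper)."""
    # Keep only the readings that exceed the threshold; these are
    # the values a central system most likely needs to see in full.
    alerts = [r for r in readings if r > alert_threshold]
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        "alerts": alerts,
    }


# Hypothetical temperature readings collected on a factory floor.
raw_readings = [70.0, 71.0, 69.0, 95.0, 70.0]

# The edge device would forward this summary instead of every raw reading.
summary = summarize_at_edge(raw_readings, alert_threshold=90.0)
print(summary)
```

In this sketch the device sends four summary fields rather than the full batch, which is one simple way the latency and cost benefits listed above could show up in practice.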

Photo Credit: BeeBright/Shutterstock.com